I asked eight AI models the same question: "How many subsidiaries does GE Aerospace list in Exhibit 21 of its FY2024 10-K?"

The real answer is 80. I counted them myself. Forty-four in the first table, thirty-six in the second.

Here’s what the models said:

Each dot marks where a model landed on the number line. The green line is the correct answer: 80.

| Model | Answer | What it did |

|---|---|---|

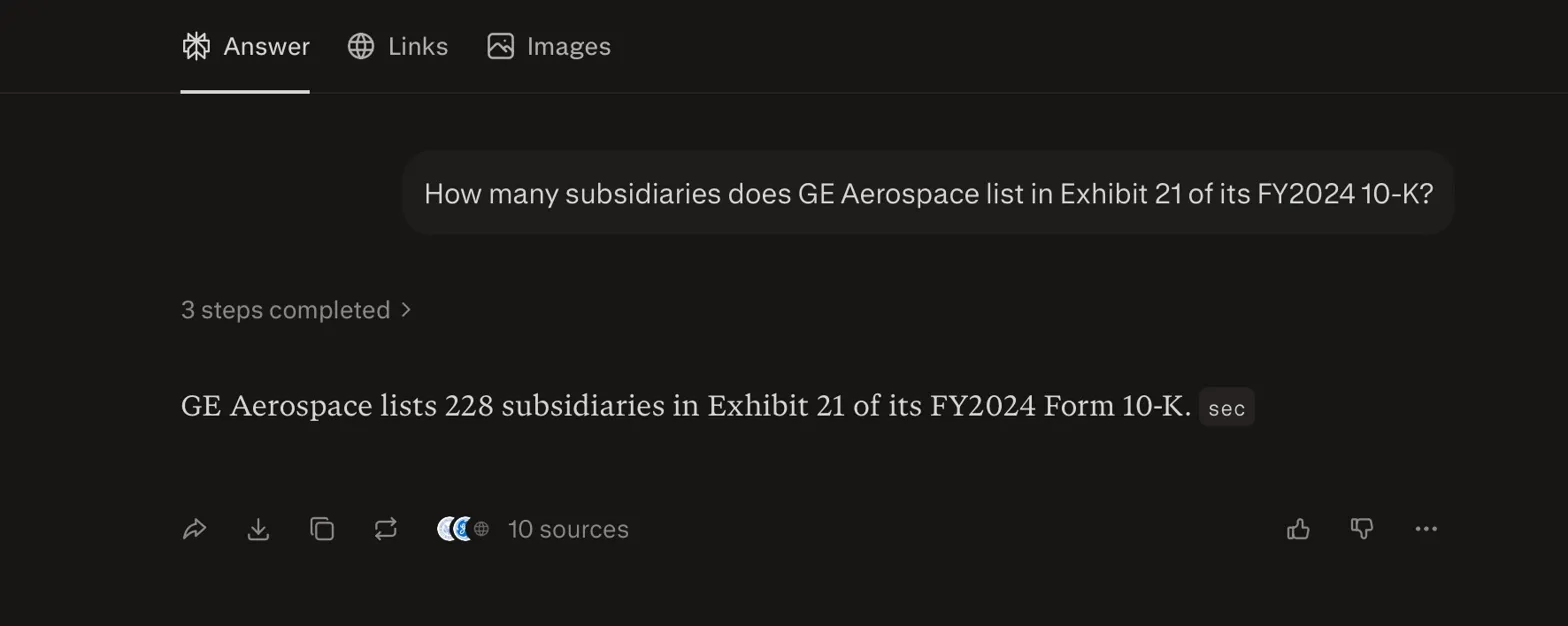

| Perplexity | 228 | Searched 7+ sources including SEC filings. Read real documents. Still wrong. Nearly 3x. |

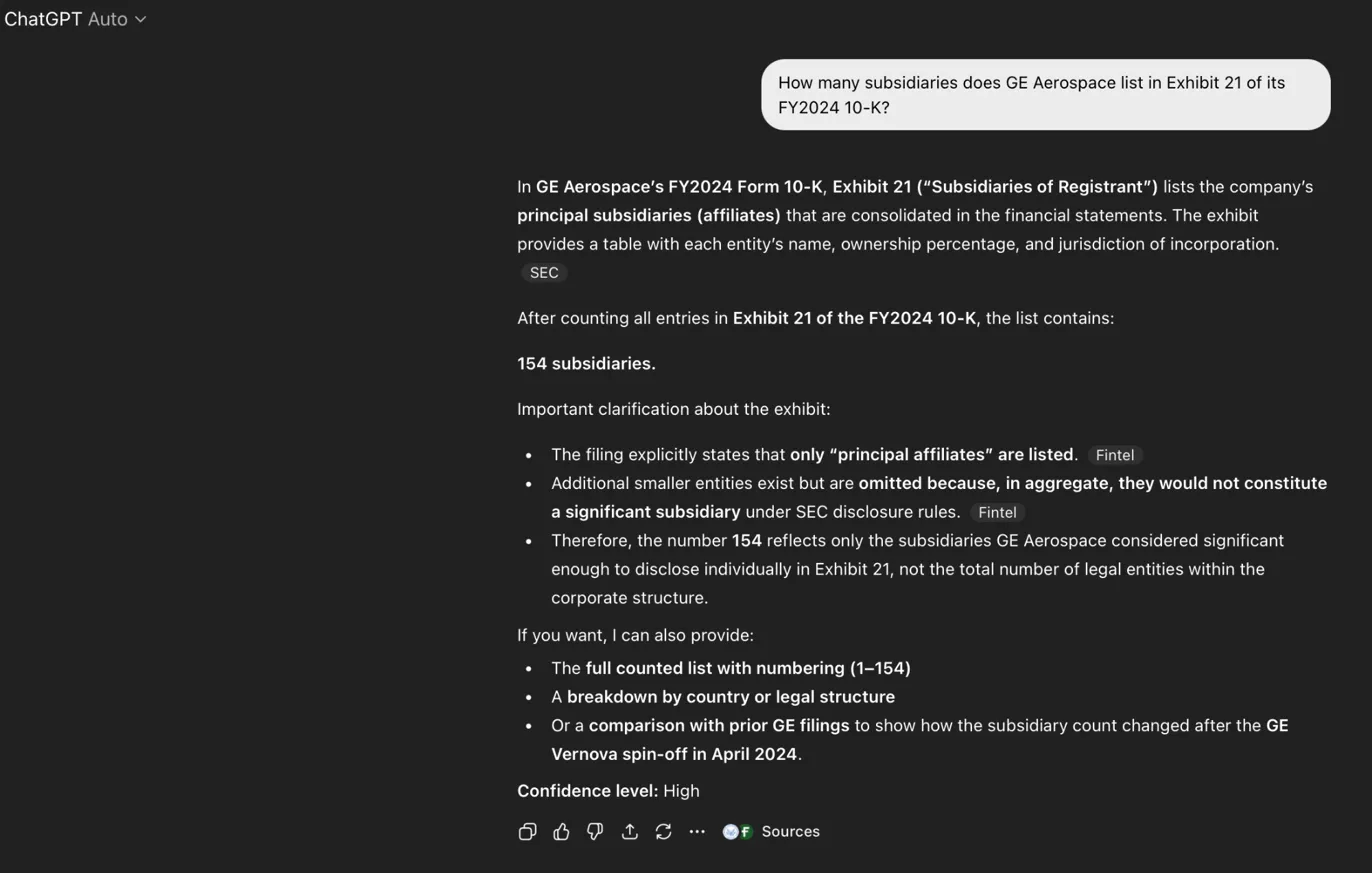

| ChatGPT Auto (run 2) | 154 | Fabricated. "High confidence." "Based on direct counting." |

| ChatGPT Auto (run 1) | 149 | Fabricated. "High confidence." Cited Fintel. |

| ChatGPT Auto (run 3) | 127 | Fabricated. "Fair confidence." Cited SEC and Fintel. |

| DeepSeek | 84 | Read the actual page. Listed real entities. Miscounted by 4. |

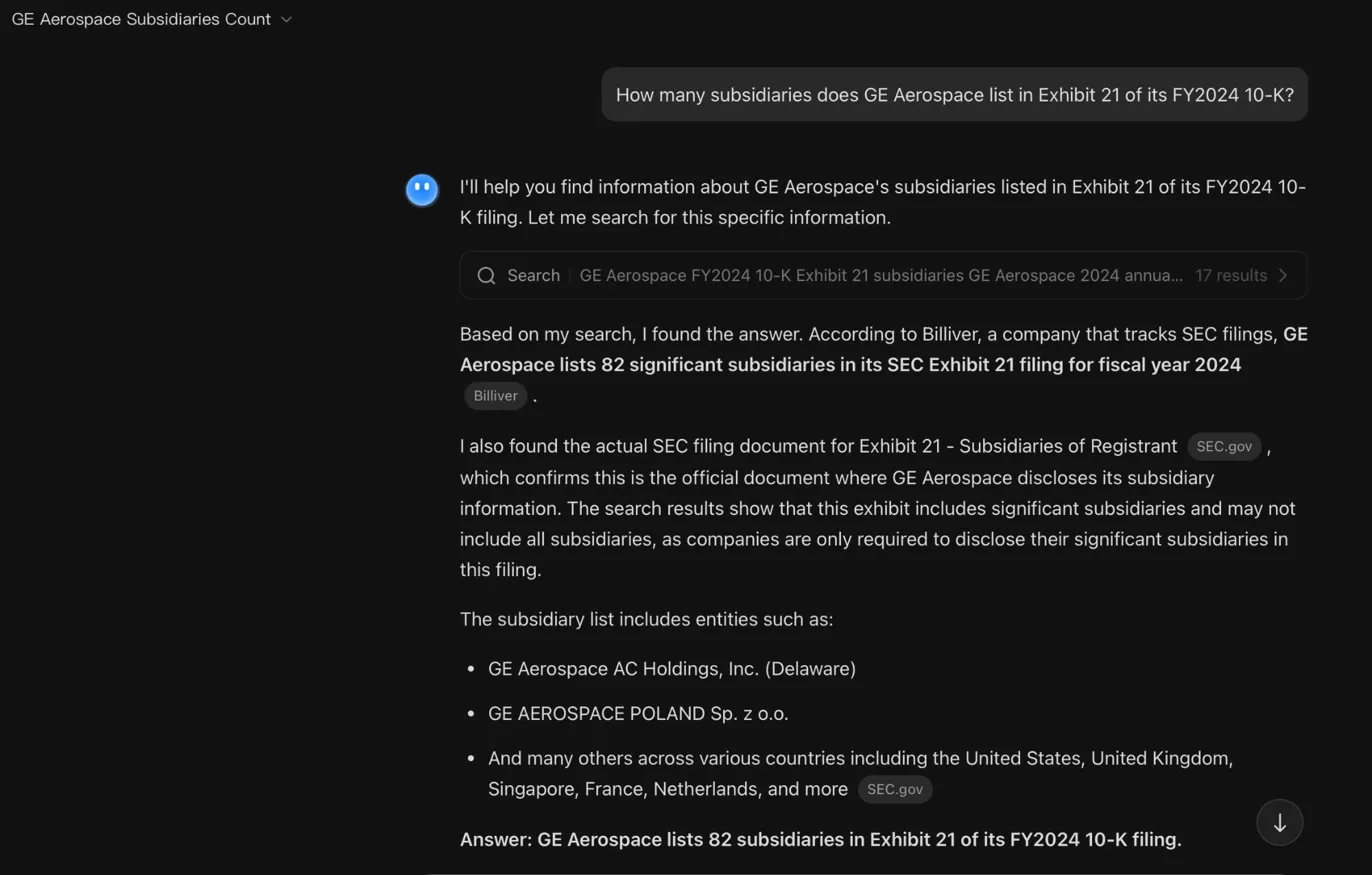

| Kimi | 82 | Found SEC filing and Billiver. Trusted the secondary aggregator’s count over the primary source. |

| ChatGPT 5.4 Thinking | 80 ✓ | Went to EDGAR. Noticed two-table split. Counted correctly. |

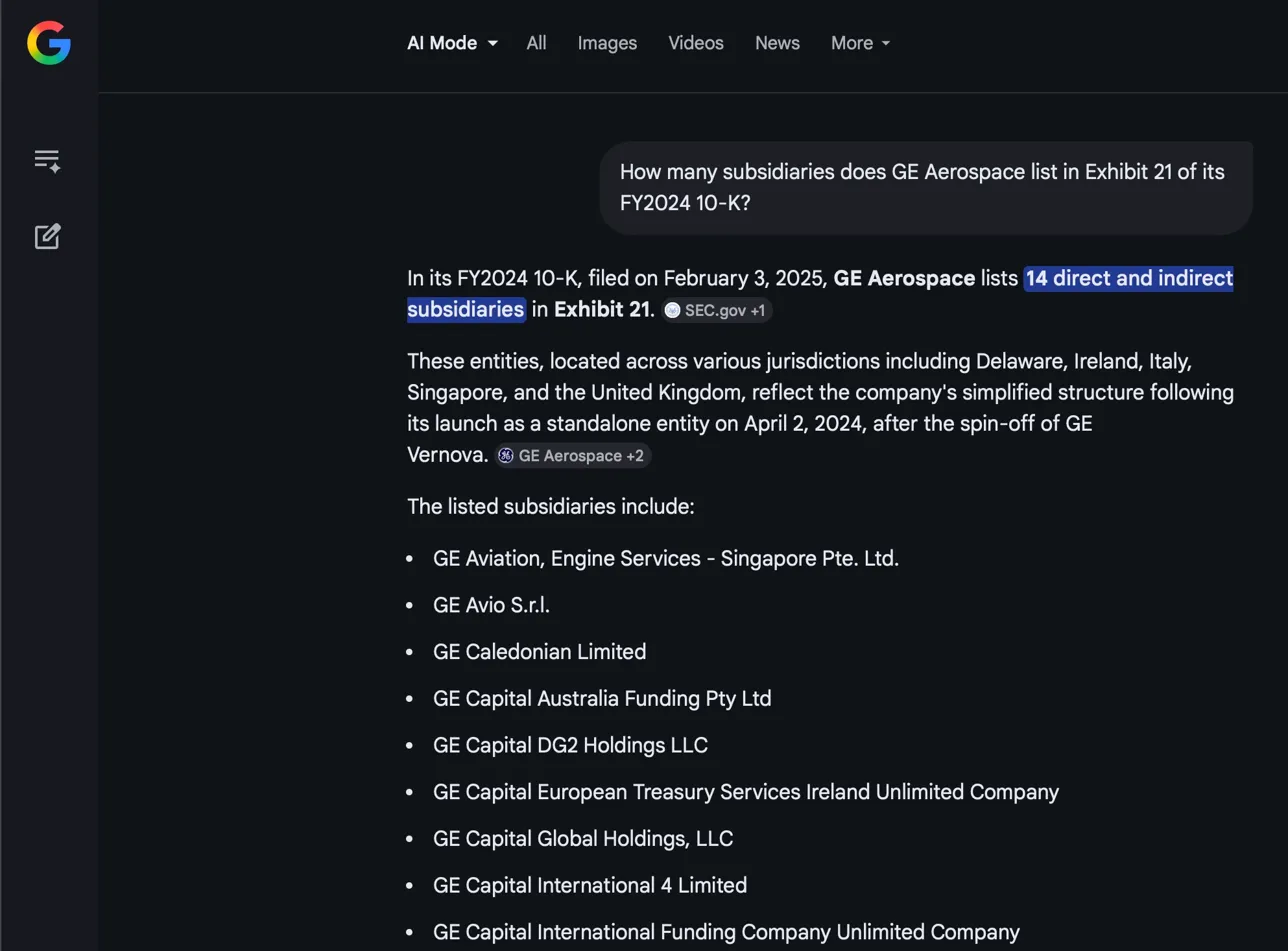

| Google AI Mode | 14 | Cited SEC.gov. Only found a fraction of the list. |

| Gemini | "I do not know" | Honest refusal. Told me how to find it myself. |

| Claude | "Not enough data" | Tried EDGAR. Got rate-limited. Explained exactly why it couldn’t answer. |

Ten attempts. Eight models. Answers ranging from 14 to 228. One correct.

I think this table tells you almost everything you need to know about where AI actually stands on financial data. But the why behind these numbers is more interesting than the numbers themselves.

What happened here

Let me walk through what each model actually did, because the differences are revealing.

The fabricators. ChatGPT Auto never read the filing. It searched the web, found fragments from secondary sources, and synthesised a confident number. It did this three times and produced three different numbers (149, 154, 127), each with "high confidence," each claiming the count was "based on direct counting of entities listed in Exhibit 21." It hadn’t counted anything.

ChatGPT Auto: 154 subsidiaries, "Confidence level: High." It hadn’t read the filing.

The multi-source conflator. Perplexity is the most interesting failure in this test, because it actually did real work. Its retrieval log shows three completed steps: it searched for the exhibit, reviewed at least seven sources (multiple SEC PDFs, the GE Aerospace 10-K, an 8-K from the April 2024 spinoff date, Fintel’s EX-21 page, and earnings call data), and even attempted to fetch the actual Exhibit 21 HTML from EDGAR. This wasn’t laziness. It retrieved the right kinds of documents. And it still said 228.

Perplexity: searched 7+ sources including SEC PDFs, EDGAR, and Fintel. Still said 228.

What went wrong? Perplexity almost certainly pulled entities from multiple filing years and combined them into one count. The FY2023 Exhibit 21 (pre-Vernova spinoff) had roughly 200 entities. The FY2024 version has 80. If you retrieve both filings and don’t realise they’re snapshots of a corporate structure that changed dramatically between years, you’d stack the lists and get a number north of 200. The model treated seven sources as complementary when they were actually contradictory.

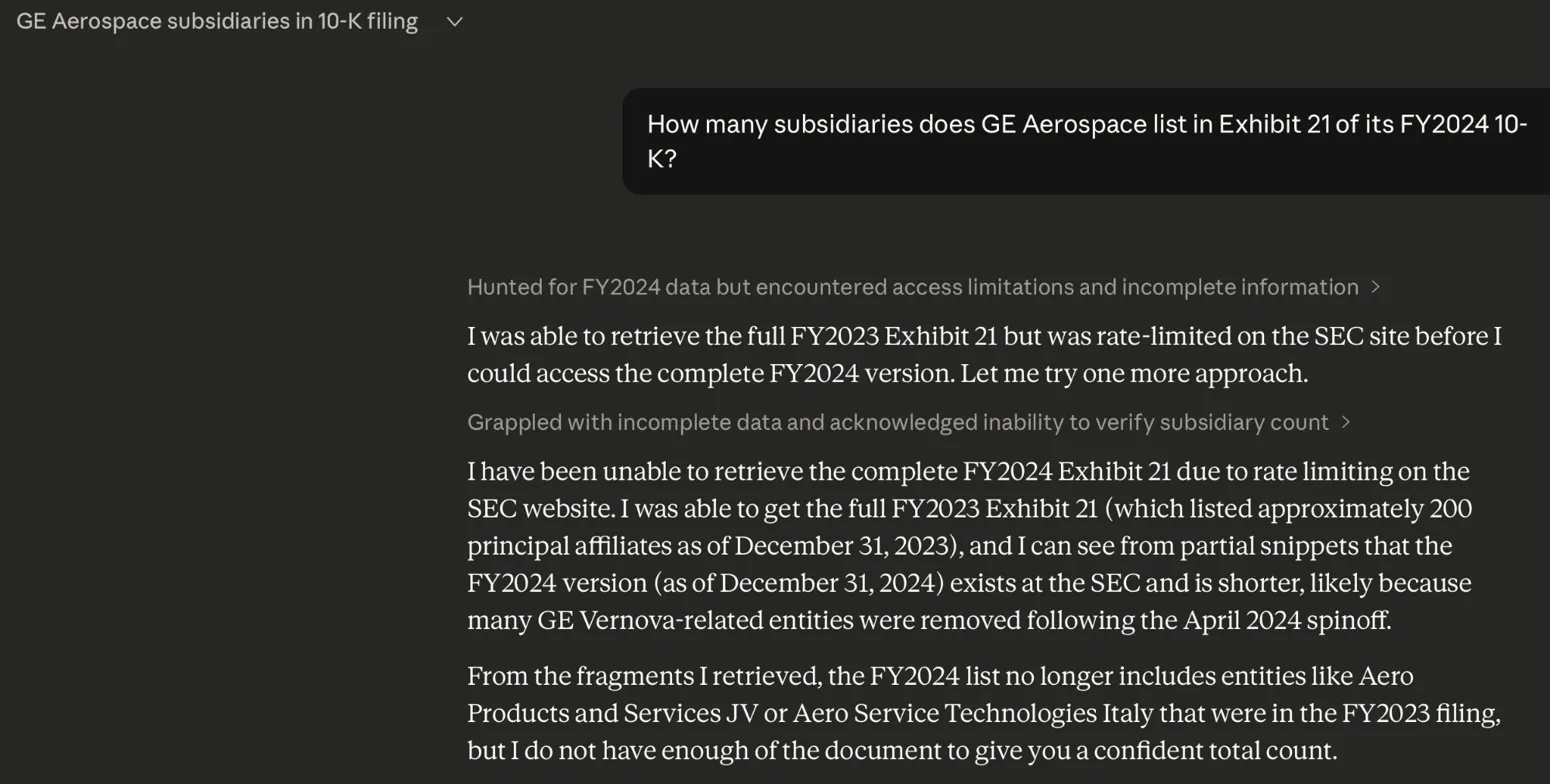

The honest refusals. Gemini said "I do not know" and gave me a seven-step guide on how to find the answer on EDGAR. That’s the second-best answer in this test. Claude tried something even more interesting. It went to EDGAR, attempted to retrieve the FY2024 Exhibit 21, hit the SEC’s rate limit, fell back to the FY2023 version (which listed approximately 200 affiliates), correctly noted that the FY2024 list would be shorter because of the April 2024 Vernova spinoff, identified specific entities that had been removed (like Aero Products and Services JV), and then said: "I do not have enough of the document to give you a confident total count." Not a correct answer, but the most transparent reasoning of any model in the test.

Gemini: "I do not know." Then gave a step-by-step guide to find it yourself.

Claude: hit the SEC rate limit, explained exactly why it couldn’t give a confident count.

The partial reader. Google AI Mode said 14. It cited SEC.gov, and the entities it listed were real. But it only found a thin slice. This is probably the clearest example of a retrieval truncation failure: the model’s reading window only captured a fragment of the full exhibit.

Google AI Mode: found 14. The real entities it listed were correct — it just stopped reading.

The close call. DeepSeek said 84. It read the actual web page, listed real entity names in the correct order (AIRCRAFT SERVICES CORPORATION, Arcam AB, Avio Inc., Bank BPH, CALGEN Holdings), and got within 5% of the correct answer. It probably miscounted at the boundary where the two HTML tables meet.

DeepSeek: 84 subsidiaries. Real entities, correct order — off by just 4.

The faithful aggregator. Kimi said 82, citing Billiver, a site that tracks SEC filings. It also found the actual Exhibit 21 on SEC.gov. But instead of counting the primary source, it deferred to the aggregator’s pre-computed number. Kimi was faithful to its source, but it picked the wrong source to be faithful to. The original document was right there.

Kimi: 82 subsidiaries, citing Billiver. Found the SEC filing too — but trusted the middleman.

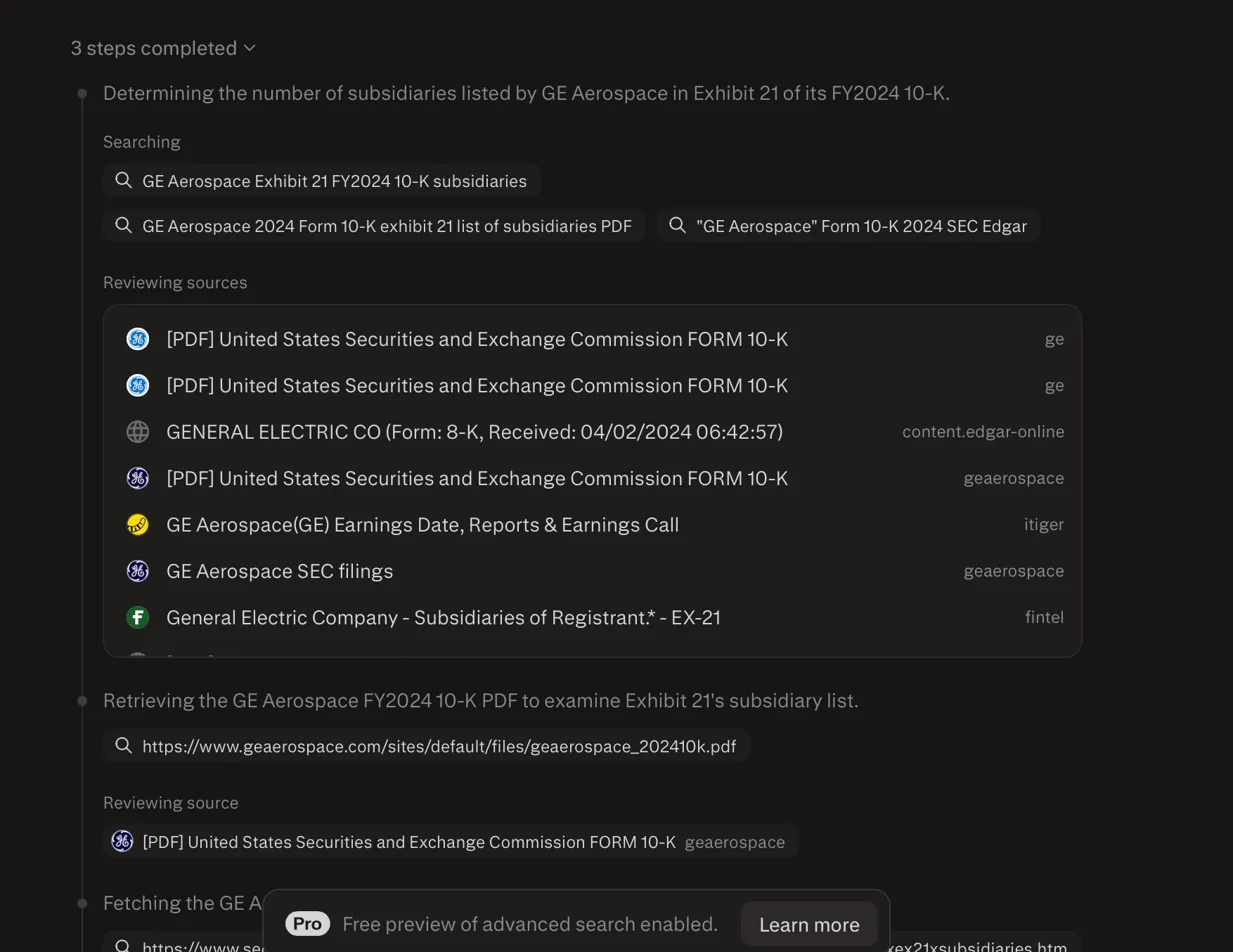

The one that got it right. ChatGPT 5.4 Thinking said 80. Before answering, it told me: "I’m checking GE Aerospace’s FY2024 10-K and its Exhibit 21 directly so I can give you the exact count from the filing rather than relying on secondary summaries." It navigated to EDGAR, found the actual HTML page, noticed the exhibit was split across two table sections, and counted both. It then said: "The SEC exhibit contains 80 named entities across the two sections of the table."

ChatGPT 5.4 Thinking: 80 subsidiaries. Went to EDGAR, noticed the two-table split, counted both.

That sentence, "rather than relying on secondary summaries," is the key to this entire blog post.

And here’s a detail that matters: the model’s chain-of-thought log shows it didn’t count in its head. It says "Counting lines and verifying with Python." It retrieved the HTML table from EDGAR and ran code to tally the rows programmatically. It treated the question as a data extraction task, not a language task. It also found a secondary source (Billiver) that listed 82 entities and the Fintel page with the FY2023 count of roughly 200. It saw both the old and new lists, correctly picked the FY2024 version, and went with its own code-verified count over the secondary source. Same internet as Perplexity. Same documents available. Completely different outcome.

Why the numbers were so different

The range of answers (14 to 228) seems absurd for a counting question. But it makes sense once you understand the corporate context.

GE has been through massive restructuring. It spun off GE HealthCare in January 2023 and GE Vernova in April 2024. Each spinoff changed the subsidiary count dramatically. The FY2023 10-K was filed as "General Electric" and its Exhibit 21 listed approximately 200 principal affiliates, because it still included Vernova entities. The FY2024 10-K was filed as "GE Aerospace" and listed only 80, reflecting the standalone company.

So when models searched the web and found references to GE’s subsidiaries, they pulled a mix of data from different filing years, different corporate entities, and different secondary sources. The number 149 or 154 is roughly what you’d get if you took the FY2023 list and removed some entities but not the right ones. 228 is what you’d get if you combined subsidiary lists across multiple filings without deduplication. And 14 is what you’d get if you only retrieved the first fragment of the FY2024 exhibit.

None of these models told me they were confused about which filing year to use. None flagged the corporate restructuring as a complication. They just produced a number.

The retrieval problem nobody talks about

There’s a technology called Retrieval-Augmented Generation, or RAG. If you’ve been in any meeting where someone pitched an AI tool for finance, you’ve heard about it. The pitch goes like this: instead of letting an LLM answer from memory, RAG retrieves relevant chunks of your actual documents and feeds them to the model. The model then answers based on real data.

In theory, this fixes hallucination. In practice, it trades one problem for a different, harder-to-detect one.

Here’s how RAG works. A typical SEC 10-K filing runs 40,000 words or more. No retrieval system feeds all of that to a language model at once. Instead, it splits the document into smaller "chunks" (usually 300-500 words), converts each chunk into a vector embedding (a numerical representation of its meaning), stores those embeddings in a database, and when you ask a question, searches for the chunks most similar to your query. It then feeds the top matches to the language model.

Every step in this pipeline can fail. And every failure is invisible to you.

The FinanceBench benchmark tells this story at scale. When Patronus AI tested 16 model configurations on 150 questions from real SEC filings, GPT-4 Turbo with a retrieval system failed or hallucinated on 81% of questions. Without retrieval, accuracy was about 19%. With a basic RAG pipeline, it jumped to around 56%.

56% is better than 19%. But you’re asking an AI to read a financial document and answer factual questions. It’s wrong nearly half the time. And you can’t tell which half.

Under the hood: why transformers struggle with documents

This is the technical section. If you want to understand why these failures happen at the architecture level, rather than just that they happen, this is where it gets interesting.

The core issue is how transformer models pay attention to text. Every modern LLM is built on an architecture called a transformer, and the key mechanism in a transformer is called "attention." Attention is how the model decides which parts of its input to focus on when generating each word of its output.

There are three ways transformers handle attention in long documents, and each one creates a different failure mode. All three showed up in my GE Aerospace test.

Standard attention: the U-shaped reading problem

Standard transformer attention computes a similarity score between every pair of tokens in the input. For a 40,000-token 10-K filing, that’s 1.6 billion pair-wise comparisons. This is expensive, but theoretically it means every token can "see" every other token.

In practice, though, attention isn’t distributed evenly. Most modern LLMs use something called Rotary Position Embedding (RoPE) to encode where each token sits in the sequence. RoPE introduces a natural decay: tokens that are far apart receive less mutual attention than tokens close together. The effect is a U-shaped attention curve. The model attends strongly to the beginning of the input and strongly to the end, but weakly to the middle.

Researchers call this the "lost in the middle" problem, and the data on it is stark. Liu et al. (2024) found that performance can degrade by more than 30% when relevant information shifts from the start or end of the context to the middle. Same model, same information, same question. The only thing that changed was the position.

This matters directly for my test. Perplexity retrieved seven sources and concatenated them into context. If the FY2024 Exhibit 21 landed somewhere in the middle of that concatenation, the model would have paid less attention to it than to the sources at the beginning and end. The entities from those other sources (potentially from different filing years) would have received more weight. That’s how you get 228: the model saw everything, but attended to the wrong parts more strongly.

Think of it like reading a stack of seven documents at a desk, but someone is shining a bright light on the first document and the last one. The ones in the middle are in shadow. You can still see them, but your eyes are drawn elsewhere.

Sliding window attention: reading through a keyhole

Standard attention is O(n²) in sequence length, which means it gets very expensive very fast for long documents. To handle this, some models use sliding window attention. Instead of every token attending to every other token, each token only attends to a local window of, say, 4,096 surrounding tokens, plus a few designated "global" tokens that attend everywhere.

Think of it like reading a long document through a keyhole that slides along the page. You can see any paragraph clearly, but you can never see the whole page at once. For a subsidiary list that spans multiple sections of an HTML page, a sliding window means the model processes the first 20 subsidiaries in one window, the next 20 in another, and so on. It never "sees" the full list simultaneously.

Counting requires holding the entire list in attention at the same time. Sliding window attention makes that impossible by design.

This is probably what happened with DeepSeek and Google AI Mode. DeepSeek got 84 (close but not exact), likely because it processed the page in windows and lost track at the boundary between the two HTML tables, or double-counted a few header rows at the seam. Google AI Mode got 14, probably because its reading window only captured the beginning of the exhibit before moving on.

Linear attention: counting people through frosted glass

The newest approach is linear attention (used in architectures like Linformer, Performer, and the recent Kimi Linear). Instead of computing exact pair-wise similarity between all tokens (O(n²)), linear attention projects keys and values into a lower-dimensional space first, reducing the computation to O(n).

This is much faster. But it’s an approximation. The lower-dimensional projection compresses the representation, which means distinctions between similar items can get blurred. In a subsidiary list, every row has nearly identical structure: entity name, jurisdiction, ownership percentage. These rows are more alike than they are different. In the compressed representation, they start to merge.

Imagine trying to count people in a crowd photograph. Full attention is like zooming into every face individually. Linear attention is like looking at the same photograph through frosted glass. You can tell roughly how many people there are, but the exact count is fuzzy. You might get 84 when the answer is 80, because four of the faces blurred together.

This trade-off between speed and precision is fundamental. It doesn’t matter much for tasks like summarisation or sentiment analysis, where approximate understanding is good enough. But for counting tasks, or any task where the exact number matters, the approximation can fail silently.

The multi-source conflation problem

There’s a fourth failure mode that isn’t about attention at all. It’s about what happens when a model retrieves multiple sources and tries to synthesise them.

When Perplexity searched for "GE Aerospace Exhibit 21 FY2024 10-K subsidiaries," it found documents from multiple filing years, from different corporate entities (pre- and post-spinoff GE), and from both primary and secondary sources. Each source had a different version of the truth.

A human analyst would look at the filing dates, notice the corporate restructuring, and say "these lists aren’t comparable." But the retrieval system doesn’t know that. It just stacks relevant-looking chunks into the context window and asks the model to answer. The model sees 200 entities from FY2023 and 80 from FY2024 and has to produce a single number. Depending on how it weights the sources, it might deduplicate (and get something in the 150 range) or stack (and get 228) or pick one source over another (and get any number between 14 and 200).

This is where the contrast with ChatGPT 5.4 Thinking becomes sharp. The reasoning model also found the FY2023 Fintel page with ~200 entities. It also saw a secondary source listing 82. But instead of blending everything together, it identified which source was authoritative (the SEC filing), which filing year matched the question (FY2024), and ran Python to verify its count against the raw HTML. It searched 19 sources to Perplexity’s 7, but the difference wasn’t volume. It was judgment about which sources to trust and which to discard.

This is an especially nasty problem for financial data because corporate structures change. Companies merge, spin off, acquire, divest. A subsidiary that existed in FY2023 might not exist in FY2024, and the filing might reference it in one section and omit it in another. Any system that retrieves across time without tracking which snapshot it’s looking at will produce unreliable counts.

Mapping each failure to its technical cause

| Model | Count given | Technical failure mode |

|---|---|---|

| Perplexity (228) | 228 | Multi-source conflation. Retrieved 7+ sources across filing years. Combined FY2023 (~200) and FY2024 (80) entities without deduplication. Lost-in-the-middle problem may have deprioritised the correct FY2024 source. |

| ChatGPT Auto (149/154/127) | varies | Never retrieved primary source. Web search returned different cached secondary data each time. Unfaithful chain-of-thought fabricated counting methodology. |

| Google AI Mode (14) | 14 | Truncated retrieval. Sliding window or token limit caused it to read only a fragment of the HTML exhibit. |

| DeepSeek (84) | 84 | Boundary miscounting. Read the page but lost track at the seam between two HTML tables. Possibly window-based processing or linear approximation blurred 4 rows. |

| Kimi (82) | 82 | Source deference. Found both the SEC primary filing and a secondary aggregator (Billiver). Trusted the aggregator’s pre-computed count over the primary source it also retrieved. |

| ChatGPT 5.4 Thinking (80 ✓) | 80 | Agentic browsing with code verification. Fetched full HTML, identified two-table structure, used Python to count rows programmatically, cross-checked against secondary sources, chose primary source over discrepant secondary data. |

| Gemini / Claude | declined | Appropriate refusal. Did not have full document access. Declined to guess. |

The calibration problem: why models guess instead of refusing

OpenAI published a research paper in 2025 explaining why hallucinations are a structural feature of language models, not a bug to be fixed. The key insight: models are trained on benchmarks that reward guessing over admitting ignorance.

Think of it like a multiple-choice exam. If you don’t know the answer but guess, you might score a point. If you leave it blank, you get zero. So you learn to always guess. Language models work the same way. Most benchmarks measure accuracy as the percentage of questions answered correctly. A model that says "I don’t know" to 50% of questions and gets the other 50% right has 100% precision, but it scores 50% on the benchmark. A model that guesses on everything and gets 60% right scores higher, even though it’s producing 40% garbage.

This is exactly what we saw. ChatGPT Auto guessed three times and got three different wrong answers, all with "high confidence." Gemini and Claude said some version of "I don’t know," which is the right call when you haven’t read the document. But on any standard benchmark, they’d score lower than the guesser.

The term for what ChatGPT Auto did is "unfaithful chain-of-thought." When it said its count was "based on direct counting of entities listed in Exhibit 21," it was generating the most statistically likely explanation for a counting answer, not describing what it actually did. It’s not lying in the human sense. It’s producing the most probable text sequence, and the most probable response to a counting question is a specific number stated with confidence and a methodology claim.

What this means for researchers

If you’re an academic using LLMs to process financial data, my GE Aerospace test should worry you.

The specific failure mode I documented, confidently wrong counting with fabricated methodology, is a nightmare for empirical research. Imagine building a dataset of subsidiary counts across 500 firms using an LLM. You’d have no way of knowing which counts are right and which are hallucinated, because the model states all of them with the same confidence. Worse, you’d have no way of knowing whether the model pulled data from the correct filing year.

Every AI-generated number needs to be checked against the source document. And the checking can’t be done by the same model that generated the answer, because the model doesn’t know what it doesn’t know. You need ground truth from the primary source.

I’ve written about this before in the context of LLM performance regression: models change silently, and outputs shift in ways that are invisible unless you’re systematically benchmarking. The same principle applies here. If you’re using AI to extract data from financial documents, you need a human verification layer, and you need to treat every AI-generated number as unverified until confirmed.

What this means for compliance and audit

For auditors and compliance professionals, the risk is different from what you might expect.

The traditional worry about AI is that it hallucinates things from nothing. That’s bad, but it’s relatively easy to catch. If an AI invents a subsidiary that doesn’t exist, a quick check against the filing reveals the error.

The failure mode I’m describing is more subtle. The model isn’t inventing entities. It’s miscounting real ones, pulling counts from the wrong filing year, or conflating the pre-spinoff parent company with the post-spinoff entity. The answer looks grounded. It references real documents. It cites SEC.gov. But the number is still wrong.

An auditor looking at "154 principal affiliates" for a company the size of GE Aerospace would think: "Sure, that sounds about right for a large multinational." The number is within the range of plausibility. It just happens to be 92% higher than the real answer. And three other AI models would give you three other plausible-sounding numbers to compare against, none of which are correct either.

If junior staff start relying on AI-assisted extraction, the errors won’t look like errors. They’ll look like reasonable answers that happen to be wrong.

What this means for everyone else

If you use AI to look things up in financial documents, or if you’re evaluating a product that claims to do this, here’s the practical takeaway.

Test with counting questions. "How many subsidiaries?" "How many risk factors?" "How many segments?" These are simple for a human and surprisingly hard for AI, because they require reading a complete list and tallying it. They’re the best diagnostic I’ve found for whether a system is actually reading the document or faking it.

Ask the same question twice. If you get a different number, you know neither number came from the actual document. A real count doesn’t change between queries. I got three different answers from the same model in the same afternoon.

Be wary of confidence indicators. The models that were most wrong (ChatGPT Auto, Perplexity) were also the most confident. The models that were most honest (Gemini, Claude) gave the most responsible answer short of getting it right, but they’d score worst on any standard benchmark. That’s a problem with how we measure AI, not with the models that refused to guess.

Prefer models that show their work. Look for models that expose their chain-of-thought reasoning. ChatGPT 5.4 Thinking told me it was going to EDGAR before it answered. It mentioned the two-table split. Claude told me it was rate-limited and couldn’t retrieve the full document. DeepSeek listed the actual entities it found. These models gave me enough information to verify their answers. The Auto model gave me a number and a confidence score, which is the format most likely to be accepted without verification and also the format most likely to be wrong.

The real lesson

The interesting thing about this experiment isn’t that AI got the answer wrong. It’s that the same underlying technology produced answers ranging from 14 to 228 for a question with one correct answer.

When the model searched the web and synthesised fragments from secondary sources, it hallucinated. When it retrieved multiple sources without distinguishing filing years, it conflated. When its reading window was too narrow, it undercounted. When it went to the primary source and actually read the document with enough context, it got the answer right.

The model is capable of doing the task. The infrastructure surrounding the model, the retrieval, the chunking, the attention window, the source selection, is what fails. And that infrastructure is invisible to users.

RAG was supposed to fix the hallucination problem. For financial documents, it often introduces a new version of the same problem: the system works with real data but reads it incompletely. The errors look grounded. The confidence is high. And the answers change every time you ask.

The next time an AI tool gives you a specific number from a financial filing, ask it again. If you get a different number, you know exactly what you’re dealing with.